One of the most fascinating things about Star Trek is how prescient it can be when it comes to technology. One era’s science fiction becomes another’s science fact, often in surprising ways. The Star Trek: Voyager episode “Author, Author”, in which the Doctor, an artificial intelligence, fights for creative control of his holonovel, was written in 2001. No one could have known that 22 years later, the Writers Guild of America would launch a 148-day strike that, along with other gains such as greater financial security, won writers the right not to have AI write their scripts for them. From this modern perspective, does that make the Doctor the “bad guy” in this story? Or, role reversal aside, does the episode’s message that creative rights are human rights still hold true?

Today’s AI writing programs, such as ChatGPT, work by absorbing billions of existing texts, identifying patterns in them, and using those patterns to generate new text in response to user prompts. Similarly, the Doctor’s holonovel, Photons, Be Free, is based on a data set of his own memories: it’s the story of an Emergency Medical Hologram on a thinly disguised version of Voyager, asserting his equality to his flesh-and-blood shipmates. Some of the incidents he writes about, while exaggerated for drama, contain some truth: Captain Janeway really did once kill a crewmember (“Tuvix”) and rewrite the Doctor’s program without his consent (“Latent Image”). Others, such as Lieutenant “Marseilles” (Paris) cheating on his wife, are the Doctor’s inventions, probably drawn from other stories he has read. When the real Voyager officers play through the novel, they are understandably outraged to see versions of themselves behaving immorally, much as we would be today if an AI used our likeness for deepfakes. By turning the tables on the Doctor with a scathing parody that shows him abusing his patients, Paris teaches the amateur author how hurtful such exploitative storytelling can be.

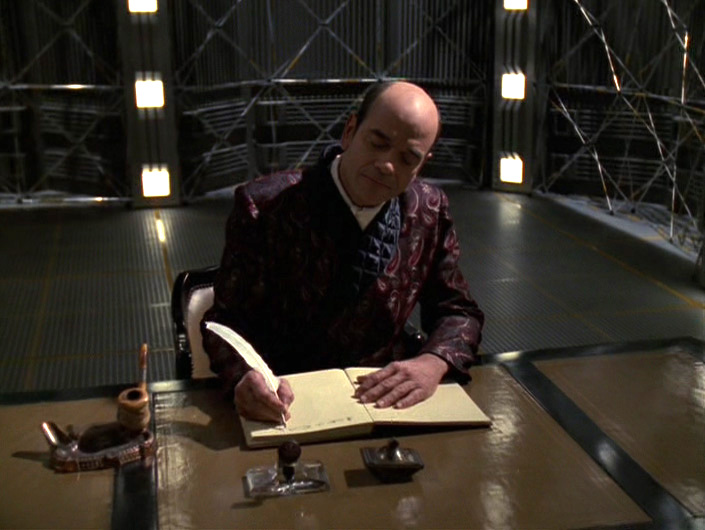

This, however, is where the story diverges from what we hear about AI today. Programs like ChatGPT do not have the capacity to care if their writing hurts people, but the Doctor does. He apologizes to his shipmates and calls Broht, his publisher, to ask for extra time to edit his novel, only to find out that the offensive first draft has already been published without his knowledge or consent. When the Doctor declares his right as an artist to recall the work, Broht replies that holograms are not people, and therefore cannot be artists. It sounds like the same argument that opponents of AI are making today, but it’s coming from the opposite perspective. Broht is not a creator whose livelihood is at risk; he is a businessman who cares more about profit and fame (he brags that Photons, Be Free is running in “thousands of holosuites”) than about his authors or the quality of their writing – the same attitude that provoked the 2023 strikes.

The real issue is not whether AI should be allowed to make art, but how to ensure that all artists have what they need to do their best work. In the episode, the Doctor and his shipmates call for a legal hearing, which ends with the arbitrator’s decision to “extend the legal definition of ‘artist’ to include the Doctor, and therefore (…) he has the right to control his work”. In real life, the WGA strike was resolved with a contract that, among other things, raised minimum pay, increased financial security, set minimum staffing requirements for writers’ rooms to give more opportunities to new writers, and, according to TV writer Dylan Guerra, “[took] AI from the existential and [turned] it into (…) a software we can choose to use if we want or not.” Contracts like this set a precedent for the future. If, during the Doctor’s hearing, everyone present takes it for granted that the definition of “artist” includes the right to creative control, we can imagine it’s because of centuries of legal battles like last year’s. The more respect we give our flesh-and-blood artists, the easier it will be to accept those who are not.